March 2026 brought no major or immediately actionable regulatory changes for AI in healthcare in Canada, the European Union, or the United Kingdom. Activity in these regions remained stable: no significant new guidance, enforcement shifts, or legislative developments directly affected AI-enabled healthcare systems during this period.

In contrast, the United States saw continued regulatory engagement, particularly through stakeholder discussions and clarification efforts led by the U.S. Food and Drug Administration (FDA). These updates focused on refining existing frameworks rather than introducing entirely new regulatory structures, with implications for AI-enabled clinical decision-making tools and medical device oversight processes.

United States

1) FDA – Clinical Decision Support (CDS) Software Guidance Engagement (March 11, 2026)

Category: Regulatory clarification / implementation guidance

On March 11, 2026, the FDA held a formal town hall session to discuss its final guidance on Clinical Decision Support (CDS) software. The session focused on clarifying how the agency interprets and applies regulatory boundaries between software functions that qualify as medical devices and those that do not.

The discussion centered on the distinction between:

- Non-device CDS software, which is excluded from FDA medical device regulation, and

- Device CDS software, which remains subject to regulatory oversight

The FDA reiterated that software may be excluded from regulation if it meets specific criteria, particularly where:

- The software is intended to support or provide recommendations to healthcare professionals (not replace them)

- The basis for the recommendations is transparent and can be independently reviewed by the clinician

- The clinician is able to understand how the software arrives at its output

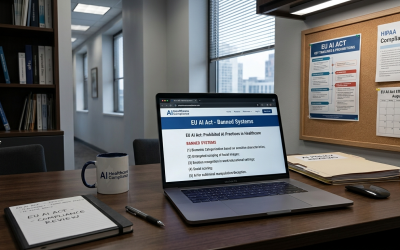

Conversely, software that:

- Produces outputs that cannot be independently evaluated by the user

- Relies on complex or non-transparent models (including certain AI/ML approaches)

- Influences clinical decisions without sufficient explainability

may fall within the definition of a regulated medical device.

The town hall also emphasized how the FDA interprets “independent review” and “transparency,” which are key criteria for determining whether CDS software remains outside regulatory scope.
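The criteria above can be sketched as a simple screening checklist. This is a minimal illustration only, assuming a team records a yes/no self-assessment for each of the three criteria listed in this summary; the class, field, and function names are hypothetical, the labels are provisional, and the result is not a regulatory determination.

```python
from dataclasses import dataclass


@dataclass
class CdsFunction:
    """Self-assessed properties of one software function (hypothetical field names)."""
    supports_clinician: bool          # recommends to a healthcare professional, does not replace them
    basis_is_transparent: bool        # the basis for each recommendation can be independently reviewed
    clinician_can_follow_logic: bool  # the clinician can understand how the output is reached


def screen_cds(fn: CdsFunction) -> tuple[str, list[str]]:
    """Return a provisional label and the list of criteria that were not met."""
    checks = {
        "supports rather than replaces the clinician": fn.supports_clinician,
        "basis for recommendations is transparent and reviewable": fn.basis_is_transparent,
        "clinician can understand how the output is reached": fn.clinician_can_follow_logic,
    }
    unmet = [name for name, ok in checks.items() if not ok]
    # Any unmet criterion is treated as a flag for closer regulatory review.
    label = "possibly non-device CDS" if not unmet else "may be a regulated device"
    return label, unmet


if __name__ == "__main__":
    opaque_model = CdsFunction(
        supports_clinician=True,
        basis_is_transparent=False,
        clinician_can_follow_logic=False,
    )
    print(screen_cds(opaque_model))
```

Returning the unmet criteria alongside the label keeps the focus on which design properties would need to change, rather than on the label itself.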

How it applies to AI in Healthcare

This guidance is particularly relevant for AI-enabled clinical tools because many AI systems:

- Use complex models that are not inherently interpretable

- Generate probabilistic or pattern-based outputs rather than rule-based recommendations

As a result, AI-based CDS tools are more likely to fall into the regulated category unless they are specifically designed to:

- Provide explainable outputs

- Allow clinicians to independently validate the reasoning behind recommendations

This reinforces a key regulatory principle:

Explainability and human interpretability are central to determining regulatory classification.

For developers, this has a practical design implication: systems that prioritize transparency and clinician oversight may reduce regulatory burden, while more autonomous or opaque systems are likely to face full medical device requirements.

FDA – Town Hall – Clinical Decision Support Software, Final Guidance

2) FDA – MDUFA VI Reauthorization Stakeholder Meeting (March 11, 2026)

Category: Regulatory process / policy development

Also on March 11, 2026, the FDA conducted a stakeholder meeting as part of the reauthorization process for the Medical Device User Fee Amendments (MDUFA VI). This process involves gathering feedback from industry, regulators, and other stakeholders on how the medical device regulatory framework should evolve for the next authorization cycle.

The meeting addressed several aspects of the medical device regulatory system, including:

- Review timelines and performance goals

- Pre-market submission processes

- Post-market oversight and monitoring

- Resource allocation and user fee structures

Stakeholders provided input on how the FDA can improve efficiency, predictability, and transparency in its review processes.

While the discussion was not limited to AI, AI-enabled medical devices were implicitly included within scope, as they are regulated under the same frameworks. Therefore, any changes to:

- Review timelines

- Evidence expectations

- Regulatory processes

will directly affect AI-based healthcare technologies.

How it applies to AI in Healthcare

For AI in healthcare, the MDUFA VI process is significant because it shapes the operational environment in which AI medical devices are reviewed and monitored.

Key implications include:

- Potential changes to review timelines, which could affect time-to-market for AI tools

- Evolving expectations for evidence, particularly around real-world performance and post-market monitoring

- Increased emphasis on regulatory predictability, which is critical for AI developers navigating complex approval pathways

Although no immediate regulatory changes were enacted, the stakeholder feedback process indicates that:

- AI-related regulatory challenges (e.g., lifecycle updates, adaptive systems, performance monitoring) are being considered within broader device policy discussions

- Future iterations of MDUFA may indirectly shape how AI systems are evaluated and maintained over time

This reflects a broader trend where AI is not always regulated through standalone frameworks, but rather through adaptation of existing medical device systems.

FDA – Industry MDUFA VI Reauthorization Meeting

Cross-Cutting Themes

1) Emphasis on Clarification Over New Regulation

Regulators continue to refine and interpret existing frameworks rather than introduce entirely new AI-specific rules.

2) Central Role of Explainability

The CDS guidance reinforces that transparency and interpretability are key determinants of regulatory classification, particularly for AI systems.

3) Integration of AI into Existing Device Frameworks

AI is being governed within established medical device regulatory structures, rather than through separate, AI-specific regimes.

4) Importance of Process Efficiency and Predictability

The MDUFA discussions show a clear focus on improving regulatory timelines and consistency, which is critical for emerging technologies like AI.

5) Lifecycle Oversight Considerations

Although not explicitly new, discussions around post-market monitoring and performance suggest continued attention to ongoing oversight, which is especially relevant for adaptive AI systems.

Key Considerations for Regulatory Alignment

For Founders & Business Owners

- Design decisions influence regulatory classification

AI systems that prioritize explainability and clinician interpretability may reduce regulatory complexity compared to opaque models.

- Expect full medical device oversight for most AI-driven clinical tools

This applies particularly where models are complex or outputs are not easily explainable.

- Plan for regulatory timelines early

Engagements like MDUFA signal that review timelines and expectations remain a critical factor in go-to-market strategy.

- Monitor evolving FDA expectations

Even without new laws, guidance interpretation (e.g., CDS criteria) can materially impact product classification and compliance requirements.

- Account for post-market responsibilities

AI systems may require ongoing monitoring, updates, and performance validation after deployment.

For Compliance & Regulatory Specialists

- Carefully assess CDS classification criteria

Particular attention should be given to:

- Transparency of outputs

- Ability for independent clinical review

- Degree of reliance on AI/ML models

- Document explainability and user interpretability

Evidence demonstrating how clinicians can understand and validate outputs will be critical for classification decisions.

- Prepare for evolving review expectations

MDUFA discussions suggest continued refinement in:

- Evidence requirements

- Review processes

- Performance evaluation standards

- Strengthen post-market surveillance frameworks

Even in the absence of new rules, regulatory focus continues to include real-world performance monitoring.

- Align with broader device regulatory processes

AI compliance should be integrated into existing medical device regulatory strategies rather than treated as a standalone domain.

Disclaimer: This checklist is provided for general informational purposes only and does not constitute legal, regulatory, or professional advice; organizations should consult with their legal and compliance departments to ensure adherence to specific jurisdictional requirements.

Sources

- FDA – Clinical Decision Support (CDS) Software Guidance Engagement (March 11, 2026)

https://www.fda.gov/medical-devices/medical-devices-news-and-events/town-hall-clinical-decision-support-software-final-guidance-03112026

- FDA – MDUFA VI Reauthorization Stakeholder Meeting (March 11, 2026)

https://www.fda.gov/media/191759/download